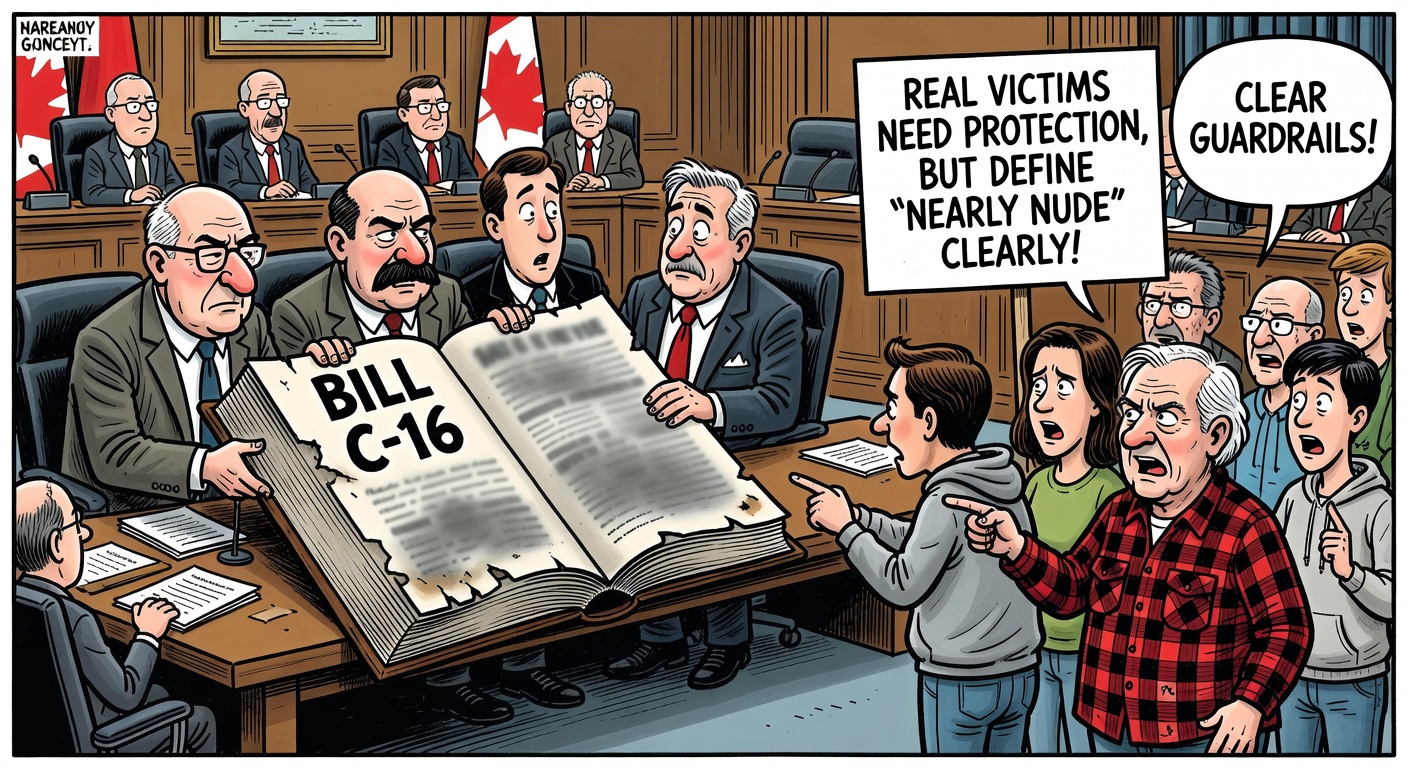

Bill C-16 Needs a Definition Before It Becomes Another Online-Control Law

Sexual deepfakes are real abuse. That is exactly why Parliament must write a precise law — not another broad Liberal online-control power with undefined edges.

A House of Commons committee has amended Bill C-16 so Ottawa’s proposed sexual-deepfake offence would cover “nearly nude” images. The change responds to a real problem: AI tools can now manufacture humiliating sexualized images that may not show full nudity but are still designed to degrade, intimidate or exploit an identifiable person.

That harm should not be minimized. Victims deserve fast takedowns, platform accountability and a criminal law that reaches genuinely abusive non-consensual material. But serious harm is not a blank cheque for sloppy drafting. When Parliament expands the Criminal Code into online images, Canadians are entitled to exact definitions, narrow scope and clear safeguards.

The original Bill C-16 text already proposed to expand the “intimate image” offence to include electronic visual representations that depict an identifiable person as nude, exposing sexual organs or engaged in explicit sexual activity, where the depiction is likely to be mistaken for a real recording. Global News reports the justice committee has now added “nearly nude” language after experts warned the first version might miss sexualized images of the kind generated with AI tools such as Grok. Liberal MP Patricia Lattanzio, parliamentary secretary to Justice Minister Sean Fraser, said the amendment clarifies the offence and responds to victims’ needs. Bloc MP Rhéal Fortin objected that “nearly nude” was not specific enough.

That objection matters. “Nearly nude” may sound obvious in a headline, but criminal law cannot run on vibes. Does it cover swimwear? A transparent costume? A crude cartoon? A political meme? A medical, artistic or journalistic image? If the answer is “trust prosecutors,” the law is not ready. The more emotionally charged the subject, the more important it is to separate true abuse from speech and expression that should not carry criminal risk.

Even the National Association of Women and the Law, which urged Parliament to broaden the definition of deepfakes, framed its brief as an effort to strengthen protections while avoiding unintended harms. Its proposal shows the hard work Parliament should be doing: defining the image, the sexualized context, the identification of the person, the consent problem and the platform response in language courts can actually apply.

Bill C-16 is also not a small stand-alone deepfake bill. Parliament’s own summary describes a large criminal and corrections package covering coercive control, femicide-related provisions, mandatory minimum penalties, restorative justice, sexual-offence procedure, delay rules and more. That makes scrutiny even more important. Broad omnibus justice bills are where governments can bury vague powers and dare critics to object at the risk of looking anti-victim.

A conservative accountability standard is simple: protect victims, punish bad actors, make platforms remove abuse quickly — and define the offence so tightly that ordinary Canadians do not have to guess where the line is. If the Carney Liberals want new online criminal powers, they owe Canadians more than good intentions. They owe them clear law.

Sources: Global News / The Canadian Press, Bill to criminalize AI sexual deepfakes will include “nearly nude” images; Parliament of Canada, Bill C-16 LEGISinfo and first-reading text; House of Commons JUST brief, National Association of Women and the Law recommendations.